Excerpt:

“Even within the coding, it’s not working well,” said Smiley. “I’ll give you an example. Code can look right and pass the unit tests and still be wrong. The way you measure that is typically in benchmark tests. So a lot of these companies haven’t engaged in a proper feedback loop to see what the impact of AI coding is on the outcomes they care about. Lines of code, number of [pull requests], these are liabilities. These are not measures of engineering excellence.”

Measures of engineering excellence, said Smiley, include metrics like deployment frequency, lead time to production, change failure rate, mean time to restore, and incident severity. And we need a new set of metrics, he insists, to measure how AI affects engineering performance.

“We don’t know what those are yet,” he said.
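The conventional delivery metrics Smiley lists can be computed once deployments are logged. The sketch below is illustrative only. The `Deployment` record and its fields are assumptions. It also simplifies mean time to restore by measuring from the failing deploy rather than from incident detection. Incident severity is left out because it depends on each organization's own scale.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean
from typing import Dict, List, Optional


@dataclass
class Deployment:
    # Hypothetical record shape: one entry per production deployment.
    committed_at: datetime                  # first commit of the change
    deployed_at: datetime                   # when it reached production
    failed: bool                            # did it cause a production failure?
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def hours(delta: timedelta) -> float:
    return delta.total_seconds() / 3600


def delivery_metrics(deploys: List[Deployment], window_days: int) -> Dict[str, float]:
    """Deployment frequency, lead time, change failure rate and MTTR over a window."""
    if not deploys:
        raise ValueError("need at least one deployment")
    failures = [d for d in deploys if d.failed]
    restores = [
        hours(d.restored_at - d.deployed_at)
        for d in failures
        if d.restored_at is not None
    ]
    return {
        "deploys_per_day": len(deploys) / window_days,
        "mean_lead_time_hours": mean(hours(d.deployed_at - d.committed_at) for d in deploys),
        "change_failure_rate": len(failures) / len(deploys),
        # Simplification: restore time is measured from the failing deploy.
        "mean_time_to_restore_hours": mean(restores) if restores else 0.0,
    }


t0 = datetime(2024, 1, 1, 9, 0)
example = [
    Deployment(t0, t0 + timedelta(hours=4), failed=False),
    Deployment(t0, t0 + timedelta(hours=8), failed=True, restored_at=t0 + timedelta(hours=10)),
]
metrics = delivery_metrics(example, window_days=1)
```

None of these metrics counts lines of code or pull requests. That is the distinction Smiley draws.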

One metric that might be helpful, he said, is measuring tokens burned to get to an approved pull request – a formally accepted change in software. That’s the kind of thing that needs to be assessed to determine whether AI helps an organization’s engineering practice.
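Here is a minimal sketch of the tokens-per-approved-PR idea. It assumes token usage can be attributed to individual pull requests, and the `PullRequest` record is hypothetical. One design choice is worth flagging: tokens spent on rejected or abandoned PRs still count, since they were burned on the way to the approved ones.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PullRequest:
    # Hypothetical fields: a real setup would join the AI tool's usage
    # logs with the code-review system to fill these in.
    tokens_used: int
    approved: bool


def tokens_per_approved_pr(prs: List[PullRequest]) -> float:
    """Total tokens spent across all PRs divided by the number approved.

    Rejected or abandoned PRs still add to the token total, because
    those tokens were burned on the way to the approved ones.
    """
    approved = sum(1 for pr in prs if pr.approved)
    if approved == 0:
        return float("inf")  # no approved PRs: the metric is unbounded
    return sum(pr.tokens_used for pr in prs) / approved
```

Take three PRs that used 120,000, 80,000, and 100,000 tokens, with the first and third approved. They come out at 150,000 tokens per approved PR.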

To underscore the consequences of not having that kind of data, Smiley pointed to a recent attempt to rewrite SQLite in Rust using AI.

“It passed all the unit tests, the shape of the code looks right,” he said. “It’s 3.7x more lines of code that performs 2,000 times worse than the actual SQLite. Two thousand times worse for a database is a non-viable product. It’s a dumpster fire. Throw it away. All that money you spent on it is worthless.”

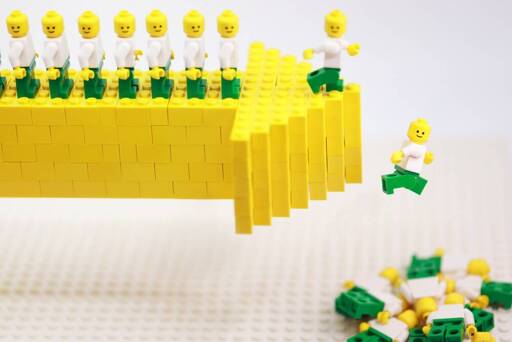

All the optimism about using AI for coding, Smiley argues, comes from measuring the wrong things.

“Coding works if you measure lines of code and pull requests,” he said. “Coding does not work if you measure quality and team performance. There’s no evidence to suggest that that’s moving in a positive direction.”

People delude themselves if they think LLMs are not useful for coding. People also delude themselves that all code will be AI written in the next 2 years. The reality is that it’s an incredibly useful tool, but with reasonable limits.

I think part of it is that it’s been overhyped for so long. But now Opus can actually do all the shit we were promised 2 years ago.

Recently had to call out a coworker for vibecoding all her unit tests. How did I know they were vibe coded? None of the tests had an assertion, so they literally couldn’t fail.

Vibe coding guy wrote unit tests for our embedded project. Of course, the hardware peripherals aren’t available for unit tests on the dev machine/build server, so you sometimes have to write mock versions (like an “adc” function that just returns predetermined values in the format of the real analog-digital converter).

Claude wrote the tests and mock hardware so well that it forgot to include any actual code from the project. The test cases were just testing the mock hardware.
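The sketch below shows the difference between testing the mock and testing project code with the mock injected. The names `battery_millivolts` and `fake_adc` are hypothetical. The example also assumes a 12-bit ADC with a 3.3 V reference.

```python
from typing import Callable


# --- project code (hypothetical) ---------------------------------------
def battery_millivolts(read_adc: Callable[[], int], vref_mv: int = 3300, bits: int = 12) -> int:
    """Convert a raw ADC reading to millivolts. The ADC reader is injected,
    so tests can substitute a mock for the real peripheral."""
    raw = read_adc()
    return raw * vref_mv // ((1 << bits) - 1)


# --- mock hardware -------------------------------------------------------
def fake_adc() -> int:
    return 2048  # predetermined value in the 12-bit format of the real ADC


# Bad: only exercises the mock and never touches project code.
def test_mock_only() -> None:
    assert fake_adc() == 2048


# Better: feeds the mock into project code and checks the project's logic.
def test_battery_millivolts() -> None:
    assert battery_millivolts(fake_adc) == 1650
    assert battery_millivolts(lambda: 4095) == 3300  # full-scale reading
```

`test_mock_only` keeps passing even if `battery_millivolts` is deleted or broken. Only the second test would catch that.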

Not realizing that should be an instant firing. The dev didn’t even glance at the unit tests…

if you reject her pull requests, does she fix it? is there a way for management to see when an employee is pushing bad commits more frequently than usual?

That’s weird. I’ve made it write a few tests once, and it pretty much made them in the style of other tests in the repo. And they did have assertions.

Trust with verification. I’ve had it do everything right, and I’ve had it do things so incredibly stupid that even a cursory glance at the code would be more than enough to /clear and start back over.

Claude Code is capable of producing code and unit tests, but it doesn’t always get them right. It’s smart enough to keep trying until it gets the result, but if you start running low on context it gets worse at it.

I wouldn’t have it contribute a lot of code AND unit tests in the same session. New session: read this code and make unit tests. New session: read these unit tests and give me advice on any problems or edge cases that might be missed.

To be fair, if you’re not reading what it’s doing and guiding it, you’re fucking up.

I think it’s better as a second set of eyes than a software architect.


A rubber ducky that talks back is also a good analogy for me.

I wouldn’t have it contribute a lot of code

Yeah, I tried that once, for a tedious refactoring. It would’ve been faster if I did it myself tbh. Telling it to do small tedious things, and keeping the interesting things for yourself (cause why would you deprive yourself of that …) is currently where I stand with this tool

and keeping the interesting things for yourself (cause why would you deprive yourself of that …

I fear that will be required at some point. It’s not always good at writing code, but it does well enough that it can turn a seasoned developer into a manager. :/

My company is pushing LLM code assistants REALLY hard (like, you WILL use it but we’re supposedly not flagging you for termination if you don’t… yet). My experience is the same as yours - unit tests are one of the places where it actually seems to do pretty good. It’s definitely not 100%, but in general it’s not bad and does seem to save some time in this particular area.

That said, I did just remove a test it created that verified that IMPORTED_CONSTANT is equal to localUnitTestConstantWithSameHardcodedValueAsImportedConstant. It passed ; )
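To illustrate why that test is tautological, the sketch below assumes a value for the constant and adds a hypothetical function that uses it. The first test just restates the hardcoded value. It passes by construction and says nothing about the code that depends on the constant.

```python
IMPORTED_CONSTANT = 250  # assumed value; imagine it lives in another module


def test_constant_matches_copy() -> None:
    # Tautological: restates the hardcoded value. It passes by construction
    # and only fails if someone deliberately edits the constant.
    localUnitTestConstantWithSameHardcodedValueAsImportedConstant = 250
    assert IMPORTED_CONSTANT == localUnitTestConstantWithSameHardcodedValueAsImportedConstant


def max_payload_size(header_bytes: int) -> int:
    """Hypothetical code that depends on the constant."""
    if not 0 <= header_bytes <= IMPORTED_CONSTANT:
        raise ValueError("header does not fit")
    return IMPORTED_CONSTANT - header_bytes


def test_max_payload_size() -> None:
    # Exercises behaviour built on the constant, including the edges.
    assert max_payload_size(10) == 240
    assert max_payload_size(IMPORTED_CONSTANT) == 0
    try:
        max_payload_size(IMPORTED_CONSTANT + 1)
    except ValueError:
        pass
    else:
        raise AssertionError("oversized header should be rejected")
```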

Hahaha 🤣

Had a vibe coder who couldn’t code himself a user authentication check (a salted SHA password hash) on a login screen.
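For reference, a salted password check takes only a few lines with Python's standard library. This is a sketch, not a vetted auth module. It swaps a single salted SHA hash for PBKDF2-HMAC-SHA256, a deliberately slow, salted key-derivation function. The iteration count is an assumption that you would tune for your own hardware.

```python
import hashlib
import hmac
import os
from typing import Optional, Tuple

ITERATIONS = 600_000  # assumed work factor; tune for your hardware
SALT_BYTES = 16


def hash_password(password: str, salt: Optional[bytes] = None) -> Tuple[bytes, bytes]:
    """Return (salt, digest), generating a fresh random salt if none is given."""
    if salt is None:
        salt = os.urandom(SALT_BYTES)
    digest = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return salt, digest


def check_login(password: str, salt: bytes, stored_digest: bytes) -> bool:
    """Re-hash the submitted password with the stored salt; compare in constant time."""
    _, candidate = hash_password(password, salt)
    return hmac.compare_digest(candidate, stored_digest)
```

At registration, store both the salt and the digest. At login, call `check_login` with the stored pair.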


Businesses were failing even before AI. If I cannot eventually speak to a human on a telephone then the whole human layer is gone and I no longer want to do business with that entity.

Guy selling ai coding platform says other AI coding platforms suck.

This just reads like a sales pitch rather than journalism. Not citing any studies just some anecdotes about what he hears “in the industry”.

Half of it is:

You’re measuring the wrong metrics for productivity, you should be using these new metrics that my AI coding platform does better on.

I know the AI hate is strong here, but just because a company isn’t pushing AI in the typical way doesn’t mean they aren’t trying to hype whatever they’re selling beyond reason. Almost no tech CEO can be trusted, including this guy, because they’re always trying to act like they can predict and make the future when they probably can’t.

My take exactly. Especially the bits about unit tests. If you cannot rely on your unit tests as a first assessment of your code quality, your unit tests are trash.

And not every company runs GitHub. The metrics he’s talking about are DevOps metrics, not development metrics. For example, in my work nobody gives a fuck about mean time to production. We have a planning schedule, and we need the OK from our customers before we can update our product.

I keep trying to use the various LLMs that people recommend for coding on various tasks, and it doesn’t just get things wrong. I have been doing quite a bit of embedded work recently, and some of the designs it comes up with would cause electrical fires; it’s that bad. Where the earlier versions would say “oh yes, that is wrong, let me correct it…” and then often get it wrong again, the new ones will confidently tell you that you are wrong. When you tell them it caught fire, they just don’t change.

I don’t get it. I feel like all these people claiming success with them are just not very discerning about the quality of the code it produces, or worse, just don’t know any better.

It is possible to get good results. The problem is that you yourself need to have a very good understanding of the problem and how to solve it, and then accurately convey that to the AI.

Granted, I don’t work on embedded and I’d imagine there’s less code available for AI to train on than other fields.

Yes, I definitely want to train a new hire who is superlatively confident that they are correct, while also having to do my job correctly as well, while said new hire keeps putting shit in my work.

Lowkey I think anyone saying LLMs are useful for work is telling everyone around them their job is producing mostly low quality work and could reasonably be cut.

I’ve seen this at work as well. The initial internal bot we had would give pretty decent info, would have sources, would say “I don’t have access to that,” etc. Now it always gives plausible-sounding answers. It uses sources that do not back up its conclusions. Then if I tell it the source does not say that, it will say it doesn’t know why it said that, that the answer “felt” correct. It was useful as a search engine, but now it’s not even that.

Hahaha. I’m guessing this guy works in developer tools. These types of metrics are great, but you rarely get there. You will get a few of them, but the reality is that the same people who want to use AI to produce faster are the same people who won’t give you time to properly instrument your system for metrics like these. Good luck with your expectation that someone measures the impact of AI in a meaningful way.

Insurers, he said, are already lobbying state-level insurance regulators to win a carve-out in business insurance liability policies so they are not obligated to cover AI-related workflows. “That kills the whole system,” Deeks said.

If insurers are going to extreme lengths to remove AI output from the list of things they will insure, this says everything about its future.

Because nothing says “effective risk management achieved” like an insurer signing off on, or forbidding the insurance of, an entire class of materials.

It’s a canary in a coal mine, like how insurers are now removing any ability for Floridians to insure against hurricanes or sea level rise, despite flat earthers screaming their heads off that climate change is a conspiracy and isn’t real.

(Note: I have seen the term “flat earther” starting to be used as a catch-all term for anyone who vehemently denies reality in spite of copious evidence that shows they are wholly and completely wrong)

I wonder if it isn’t that AI is good; it’s that all other software is ass.

At work I use patching software, antivirus, and backup software, and they’re all broken now after being patched. One of them comes from a $10.4B company and has a critical bug.

I once saw someone send ChatGPT and Gemini Pro into a constant loop by asking “Is seahorse emoji real?”. The responses just kept looping. I have heard that the “Mandela Effect” theory is not true in this case. They say the emoji existed on Microsoft’s MSN Messenger and in early versions of Skype. I don’t know how much of that is true. But it was fun seeing artificial intelligence get bamboozled by real intelligence. The guy was proving that AI is just a tool, not a permanent replacement for actual resources.

Ask it which is heavier: 20 pounds of gold or 20 feathers.

They could be dinosaur feathers, weighing a pound each 🤷♂️

I asked Perplexity, and it did indeed get the question wrong at first.

Then I said that it was not what I asked, and then it gave the correct answer:

“You’re right to push on the wording; as asked, 20 pounds of gold is heavier than 20 feathers.”

LOL! yea I did it just now. 😆

That worked the same way in GPT a month ago. Try it; it’s incredibly fun to see for yourself.

Yea I have seen that happening. 😂

I find this hard to believe, unless it’s talking about 100% vibecoding

recent attempt to rewrite SQLite in Rust using AI

I think it is talking about 100% vibe coding. And yea, it’s pretty useful if you don’t abuse it.

Yeah, it’s really good at short bursts of complicated things. Give me a curl statement to post this file as a snippet into slack. Give me a connector bot from Ollama to and from Meshtastic, it’ll give you serviceable, but not perfect code.

When you get to bigger, more complicated things, it needs a lot of instruction, guard rails and architecture. You’re not going to just “Give me SQLite but in Rust, GO” and have a good time.

I’ve seen some people architect some crazy shit. You do this big, long, drawn-out project: tell it to use a small control orchestrator, set up many agents and have each agent do part of the work, have it create full unit tests, be demanding about best practices, post security checks, ouroboros it, and let it go.

But it’s expensive, and we’re still getting venture capital tokens for less than cost, and you’ll still have hard-to-find edge cases. Someone may eventually work out a fairly generic way to set it up to do medium scale projects cleanly, but it’s not now and there are definite limits to what it can handle. And as always, you’ll never be able to trust that it’s making a safe app.

Yea, I find that I need to instruct it the way I would a junior to get any good work out of it… And our junior standard, trust me, is very, very low.

I usually spam the planning mode and check every nook of the plan to make sure it’s right before the AI even touches the code.

I still can’t tell whether it’s faster than just doing things myself… And as long as we aren’t allocated time to compare end to end with 2 separate devs of similar skill, there’s no point even trying to guess, imho. Though I’m not optimistic. I may just be wasting time.

And yea, the true costs per token are probably double what they are today, if not more…

Once you set up a proper scaffold for it in one project, it’s marginally repeatable across other projects. If all you have is one project, that would be crap. Where this will disrupt and kill things is in cheap contract work.

If you’re trying to produce grade A code parallel to a grade A developer on a single project, it’s absolutely a losing battle for AI

But if you have unit tests and say “go upgrade these libraries, test, then fix any problems, and keep looping until it all works,” it’s about at the point where it can be serviceable.

I’m betting tokens for development go up 50-100 times when it’s all done. I know that sounds shocking, but hear me out.

- Those datacenters, the ones that banks are starting to get cold feet on, are hella expensive. We’re currently paying with monopoly money.

- The cost savings at scale are only as good as the idle cycles on a machine, and the development shift for a small project is eating up days of cycles for a single machine.

- Those mega-datacenter machines will only have a lifespan of a few years.

The AI companies’ bet is that they can get companies to fire enough developers to convert a decent percentage of salaries over to AI. They’re planning on Bob’s Discount Coders to fire people making 40-80k and long-term move 40k of that salary per head to them.

It’ll be like a streaming service: they’re paying $16 a month for Claude, and the AI companies will slowly enshittify the service until it’s a grand or two per month per head.

Step 1: Depress the market for coders at a loss, allowing companies to pay less and hoover up the extra money by firing people, which means less computer science in college, making a hole in the job market.

Step 2: Slowly crank up the features and cost until the prices are back where they were, but all the money is flowing to them.

I think you hit the bullseye with this.

Yeah it is, it brings up a lot of good points that often don’t get talked about by the anti-AI folks (the sky is falling/AI is horrible) and extreme pro-AI folks (“we’re going to replace all the workers with AI”)

You absolutely have to know what the AI is doing at least somewhat to be able to call it out when it’s clearly wrong/heading down a completely incorrect path.

“Codestrap founders”

Let me guess they will spearhead the correct way to use AI?

If they help bring reality into the conversation that’s fine by me.

it sure works well for slop marketers taking A/B testing to a new level of pointlessness.

It IS working well for what it is: a word processor that’s super expensive to run. It’s because idiots thought the world was gonna end and that we were gonna have flying cars going around.

these types of articles aren’t analyzing the usefulness of the tool in good faith. they’re not meant to do a lot of the things that are often implied. the coding tools are best used by coders who can understand code and make decisions about what to do with the code that comes out of the tool. you don’t need ai to help you be a shitty programmer

they are analyzing the way the tools are being used based on marketing. yes they’re useful for senior programmers who need to automate boilerplate, but they’re sold as complete solutions.

Exactly. This reads like people are prompting for something then just using that code.

The way we use it is as a scaffolding tool. Write a prompt, then use that boilerplate to actually solve the problem you’re trying to solve.

You could say the same for people using Stackoverflow, you don’t just blindly copy and paste.

REPENT! The end is nigh!

…no but seriously